Merge branch 'main-fix' into main

@@ -101,7 +101,7 @@

|

||||

|

||||

<div align="center">

|

||||

<a href="https://www.bilibili.com/video/BV1amAneGE3P" target="_blank">

|

||||

<img src="docs/video.png" width="300" alt="麦麦演示视频">

|

||||

<img src="docs/pic/video.png" width="300" alt="麦麦演示视频">

|

||||

<br>

|

||||

👆 点击观看麦麦演示视频 👆

|

||||

|

||||

@@ -149,6 +149,8 @@ MaiMBot是一个开源项目,我们非常欢迎你的参与。你的贡献,

|

||||

|

||||

- [📦 Linux 手动部署指南 ](docs/manual_deploy_linux.md)

|

||||

|

||||

- [📦 macOS 手动部署指南 ](docs/manual_deploy_macos.md)

|

||||

|

||||

如果你不知道Docker是什么,建议寻找相关教程或使用手动部署 **(现在不建议使用docker,更新慢,可能不适配)**

|

||||

|

||||

- [🐳 Docker部署指南](docs/docker_deploy.md)

|

||||

|

||||

4

bot.py

@@ -221,7 +221,9 @@ def check_eula():

|

||||

# 如果EULA或隐私条款有更新,提示用户重新确认

|

||||

if eula_updated or privacy_updated:

|

||||

print("EULA或隐私条款内容已更新,请在阅读后重新确认,继续运行视为同意更新后的以上两款协议")

|

||||

print(f'输入"同意"或"confirmed"或设置环境变量"EULA_AGREE={eula_new_hash}"和"PRIVACY_AGREE={privacy_new_hash}"继续运行')

|

||||

print(

|

||||

f'输入"同意"或"confirmed"或设置环境变量"EULA_AGREE={eula_new_hash}"和"PRIVACY_AGREE={privacy_new_hash}"继续运行'

|

||||

)

|

||||

while True:

|

||||

user_input = input().strip().lower()

|

||||

if user_input in ["同意", "confirmed"]:

|

||||

|

||||

@@ -10,7 +10,7 @@

|

||||

|

||||

- 为什么显示:"缺失必要的API KEY" ❓

|

||||

|

||||

<img src="API_KEY.png" width=650>

|

||||

<img src="./pic/API_KEY.png" width=650>

|

||||

|

||||

>你需要在 [Silicon Flow Api](https://cloud.siliconflow.cn/account/ak) 网站上注册一个账号,然后点击这个链接打开API KEY获取页面。

|

||||

>

|

||||

@@ -41,19 +41,19 @@

|

||||

|

||||

>打开你的MongoDB Compass软件,你会在左上角看到这样的一个界面:

|

||||

>

|

||||

><img src="MONGO_DB_0.png" width=250>

|

||||

><img src="./pic/MONGO_DB_0.png" width=250>

|

||||

>

|

||||

><br>

|

||||

>

|

||||

>点击 "CONNECT" 之后,点击展开 MegBot 标签栏

|

||||

>

|

||||

><img src="MONGO_DB_1.png" width=250>

|

||||

><img src="./pic/MONGO_DB_1.png" width=250>

|

||||

>

|

||||

><br>

|

||||

>

|

||||

>点进 "emoji" 再点击 "DELETE" 删掉所有条目,如图所示

|

||||

>

|

||||

><img src="MONGO_DB_2.png" width=450>

|

||||

><img src="./pic/MONGO_DB_2.png" width=450>

|

||||

>

|

||||

><br>

|

||||

>

|

||||

|

||||

@@ -147,9 +147,7 @@ enable_check = false # 是否要检查表情包是不是合适的喵

|

||||

check_prompt = "符合公序良俗" # 检查表情包的标准呢

|

||||

|

||||

[others]

|

||||

enable_advance_output = true # 是否要显示更多的运行信息呢

|

||||

enable_kuuki_read = true # 让机器人能够"察言观色"喵

|

||||

enable_debug_output = false # 是否启用调试输出喵

|

||||

enable_friend_chat = false # 是否启用好友聊天喵

|

||||

|

||||

[groups]

|

||||

|

||||

@@ -115,9 +115,7 @@ talk_frequency_down = [] # 降低回复频率的群号

|

||||

ban_user_id = [] # 禁止回复的用户QQ号

|

||||

|

||||

[others]

|

||||

enable_advance_output = true # 是否启用高级输出

|

||||

enable_kuuki_read = true # 是否启用读空气功能

|

||||

enable_debug_output = false # 是否启用调试输出

|

||||

enable_friend_chat = false # 是否启用好友聊天

|

||||

|

||||

# 模型配置

|

||||

|

||||

@@ -1,48 +1,51 @@

|

||||

# 面向纯新手的Linux服务器麦麦部署指南

|

||||

|

||||

## 你得先有一个服务器

|

||||

|

||||

为了能使麦麦在你的电脑关机之后还能运行,你需要一台不间断开机的主机,也就是我们常说的服务器。

|

||||

## 事前准备

|

||||

为了能使麦麦不间断的运行,你需要一台一直开着的主机。

|

||||

|

||||

### 如果你想购买服务器

|

||||

华为云、阿里云、腾讯云等等都是在国内可以选择的选择。

|

||||

|

||||

你可以去租一台最低配置的就足敷需要了,按月租大概十几块钱就能租到了。

|

||||

租一台最低配置的就足敷需要了,按月租大概十几块钱就能租到了。

|

||||

|

||||

我们假设你已经租好了一台Linux架构的云服务器。我用的是阿里云ubuntu24.04,其他的原理相似。

|

||||

### 如果你不想购买服务器

|

||||

你可以准备一台可以一直开着的电脑/主机,只需要保证能够正常访问互联网即可

|

||||

|

||||

我们假设你已经有了一台Linux架构的服务器。举例使用的是Ubuntu24.04,其他的原理相似。

|

||||

|

||||

## 0.我们就从零开始吧

|

||||

|

||||

### 网络问题

|

||||

|

||||

为访问github相关界面,推荐去下一款加速器,新手可以试试watttoolkit。

|

||||

为访问Github相关界面,推荐去下一款加速器,新手可以试试[Watt Toolkit](https://gitee.com/rmbgame/SteamTools/releases/latest)。

|

||||

|

||||

### 安装包下载

|

||||

|

||||

#### MongoDB

|

||||

进入[MongoDB下载页](https://www.mongodb.com/try/download/community-kubernetes-operator),并选择版本

|

||||

|

||||

对于ubuntu24.04 x86来说是这个:

|

||||

以Ubuntu24.04 x86为例,保持如图所示选项,点击`Download`即可,如果是其他系统,请在`Platform`中自行选择:

|

||||

|

||||

https://repo.mongodb.org/apt/ubuntu/dists/noble/mongodb-org/8.0/multiverse/binary-amd64/mongodb-org-server_8.0.5_amd64.deb

|

||||

|

||||

|

||||

如果不是就在这里自行选择对应版本

|

||||

|

||||

https://www.mongodb.com/try/download/community-kubernetes-operator

|

||||

不想使用上述方式?你也可以参考[官方文档](https://www.mongodb.com/zh-cn/docs/manual/administration/install-on-linux/#std-label-install-mdb-community-edition-linux)进行安装,进入后选择自己的系统版本即可

|

||||

|

||||

#### Napcat

|

||||

|

||||

在这里选择对应版本。

|

||||

|

||||

https://github.com/NapNeko/NapCatQQ/releases/tag/v4.6.7

|

||||

|

||||

对于ubuntu24.04 x86来说是这个:

|

||||

|

||||

https://dldir1.qq.com/qqfile/qq/QQNT/ee4bd910/linuxqq_3.2.16-32793_amd64.deb

|

||||

#### QQ(可选)/Napcat

|

||||

*如果你使用Napcat的脚本安装,可以忽略此步*

|

||||

访问https://github.com/NapNeko/NapCatQQ/releases/latest

|

||||

在图中所示区域可以找到QQ的下载链接,选择对应版本下载即可

|

||||

从这里下载,可以保证你下载到的QQ版本兼容最新版Napcat

|

||||

|

||||

如果你不想使用Napcat的脚本安装,还需参考[Napcat-Linux手动安装](https://www.napcat.wiki/guide/boot/Shell-Linux-SemiAuto)

|

||||

|

||||

#### 麦麦

|

||||

|

||||

https://github.com/SengokuCola/MaiMBot/archive/refs/tags/0.5.8-alpha.zip

|

||||

|

||||

下载这个官方压缩包。

|

||||

先打开https://github.com/MaiM-with-u/MaiBot/releases

|

||||

往下滑找到这个

|

||||

|

||||

下载箭头所指这个压缩包。

|

||||

|

||||

### 路径

|

||||

|

||||

@@ -53,10 +56,10 @@ https://github.com/SengokuCola/MaiMBot/archive/refs/tags/0.5.8-alpha.zip

|

||||

```

|

||||

moi

|

||||

└─ mai

|

||||

├─ linuxqq_3.2.16-32793_amd64.deb

|

||||

├─ mongodb-org-server_8.0.5_amd64.deb

|

||||

├─ linuxqq_3.2.16-32793_amd64.deb # linuxqq安装包

|

||||

├─ mongodb-org-server_8.0.5_amd64.deb # MongoDB的安装包

|

||||

└─ bot

|

||||

└─ MaiMBot-0.5.8-alpha.zip

|

||||

└─ MaiMBot-0.5.8-alpha.zip # 麦麦的压缩包

|

||||

```

|

||||

|

||||

### 网络

|

||||

@@ -69,7 +72,7 @@ moi

|

||||

|

||||

## 2. Python的安装

|

||||

|

||||

- 导入 Python 的稳定版 PPA:

|

||||

- 导入 Python 的稳定版 PPA(Ubuntu需执行此步,Debian可忽略):

|

||||

|

||||

```bash

|

||||

sudo add-apt-repository ppa:deadsnakes/ppa

|

||||

@@ -92,6 +95,11 @@ sudo apt install python3.12

|

||||

```bash

|

||||

python3.12 --version

|

||||

```

|

||||

- (可选)更新替代方案,设置 python3.12 为默认的 python3 版本:

|

||||

```bash

|

||||

sudo update-alternatives --install /usr/bin/python3 python3 /usr/bin/python3.12 1

|

||||

sudo update-alternatives --config python3

|

||||

```

|

||||

|

||||

- 在「终端」中,执行以下命令安装 pip:

|

||||

|

||||

@@ -141,23 +149,17 @@ systemctl status mongod #通过这条指令检查运行状态

|

||||

sudo systemctl enable mongod

|

||||

```

|

||||

|

||||

## 5.napcat的安装

|

||||

## 5.Napcat的安装

|

||||

|

||||

``` bash

|

||||

# 该脚本适用于支持Ubuntu 20+/Debian 10+/Centos9

|

||||

curl -o napcat.sh https://nclatest.znin.net/NapNeko/NapCat-Installer/main/script/install.sh && sudo bash napcat.sh

|

||||

```

|

||||

|

||||

上面的不行试试下面的

|

||||

|

||||

``` bash

|

||||

dpkg -i linuxqq_3.2.16-32793_amd64.deb

|

||||

apt-get install -f

|

||||

dpkg -i linuxqq_3.2.16-32793_amd64.deb

|

||||

```

|

||||

执行后,脚本会自动帮你部署好QQ及Napcat

|

||||

|

||||

成功的标志是输入``` napcat ```出来炫酷的彩虹色界面

|

||||

|

||||

## 6.napcat的运行

|

||||

## 6.Napcat的运行

|

||||

|

||||

此时你就可以根据提示在```napcat```里面登录你的QQ号了。

|

||||

|

||||

@@ -170,6 +172,13 @@ napcat status #检查运行状态

|

||||

|

||||

```http://<你服务器的公网IP>:6099/webui?token=napcat```

|

||||

|

||||

如果你部署在自己的电脑上:

|

||||

```http://127.0.0.1:6099/webui?token=napcat```

|

||||

|

||||

> [!WARNING]

|

||||

> 如果你的麦麦部署在公网,请**务必**修改Napcat的默认密码

|

||||

|

||||

|

||||

第一次是这个,后续改了密码之后token就会对应修改。你也可以使用```napcat log <你的QQ号>```来查看webui地址。把里面的```127.0.0.1```改成<你服务器的公网IP>即可。

|

||||

|

||||

登录上之后在网络配置界面添加websocket客户端,名称随便输一个,url改成`ws://127.0.0.1:8080/onebot/v11/ws`保存之后点启用,就大功告成了。

|

||||

@@ -178,7 +187,7 @@ napcat status #检查运行状态

|

||||

|

||||

### step 1 安装解压软件

|

||||

|

||||

```

|

||||

```bash

|

||||

sudo apt-get install unzip

|

||||

```

|

||||

|

||||

@@ -229,138 +238,11 @@ bot

|

||||

|

||||

你可以注册一个硅基流动的账号,通过邀请码注册有14块钱的免费额度:https://cloud.siliconflow.cn/i/7Yld7cfg。

|

||||

|

||||

#### 在.env.prod中定义API凭证:

|

||||

#### 修改配置文件

|

||||

请参考

|

||||

- [🎀 新手配置指南](./installation_cute.md) - 通俗易懂的配置教程,适合初次使用的猫娘

|

||||

- [⚙️ 标准配置指南](./installation_standard.md) - 简明专业的配置说明,适合有经验的用户

|

||||

|

||||

```

|

||||

# API凭证配置

|

||||

SILICONFLOW_KEY=your_key # 硅基流动API密钥

|

||||

SILICONFLOW_BASE_URL=https://api.siliconflow.cn/v1/ # 硅基流动API地址

|

||||

|

||||

DEEP_SEEK_KEY=your_key # DeepSeek API密钥

|

||||

DEEP_SEEK_BASE_URL=https://api.deepseek.com/v1 # DeepSeek API地址

|

||||

|

||||

CHAT_ANY_WHERE_KEY=your_key # ChatAnyWhere API密钥

|

||||

CHAT_ANY_WHERE_BASE_URL=https://api.chatanywhere.tech/v1 # ChatAnyWhere API地址

|

||||

```

|

||||

|

||||

#### 在bot_config.toml中引用API凭证:

|

||||

|

||||

```

|

||||

[model.llm_reasoning]

|

||||

name = "Pro/deepseek-ai/DeepSeek-R1"

|

||||

base_url = "SILICONFLOW_BASE_URL" # 引用.env.prod中定义的地址

|

||||

key = "SILICONFLOW_KEY" # 引用.env.prod中定义的密钥

|

||||

```

|

||||

|

||||

如需切换到其他API服务,只需修改引用:

|

||||

|

||||

```

|

||||

[model.llm_reasoning]

|

||||

name = "Pro/deepseek-ai/DeepSeek-R1"

|

||||

base_url = "DEEP_SEEK_BASE_URL" # 切换为DeepSeek服务

|

||||

key = "DEEP_SEEK_KEY" # 使用DeepSeek密钥

|

||||

```

|

||||

|

||||

#### 配置文件详解

|

||||

|

||||

##### 环境配置文件 (.env.prod)

|

||||

|

||||

```

|

||||

# API配置

|

||||

SILICONFLOW_KEY=your_key

|

||||

SILICONFLOW_BASE_URL=https://api.siliconflow.cn/v1/

|

||||

DEEP_SEEK_KEY=your_key

|

||||

DEEP_SEEK_BASE_URL=https://api.deepseek.com/v1

|

||||

CHAT_ANY_WHERE_KEY=your_key

|

||||

CHAT_ANY_WHERE_BASE_URL=https://api.chatanywhere.tech/v1

|

||||

|

||||

# 服务配置

|

||||

HOST=127.0.0.1 # 如果使用Docker部署,需要改成0.0.0.0,否则QQ消息无法传入

|

||||

PORT=8080

|

||||

|

||||

# 数据库配置

|

||||

MONGODB_HOST=127.0.0.1 # 如果使用Docker部署,需要改成数据库容器的名字,默认是mongodb

|

||||

MONGODB_PORT=27017

|

||||

DATABASE_NAME=MegBot

|

||||

MONGODB_USERNAME = "" # 数据库用户名

|

||||

MONGODB_PASSWORD = "" # 数据库密码

|

||||

MONGODB_AUTH_SOURCE = "" # 认证数据库

|

||||

|

||||

# 插件配置

|

||||

PLUGINS=["src2.plugins.chat"]

|

||||

```

|

||||

|

||||

##### 机器人配置文件 (bot_config.toml)

|

||||

|

||||

```

|

||||

[bot]

|

||||

qq = "机器人QQ号" # 必填

|

||||

nickname = "麦麦" # 机器人昵称(你希望机器人怎么称呼它自己)

|

||||

|

||||

[personality]

|

||||

prompt_personality = [

|

||||

"曾经是一个学习地质的女大学生,现在学习心理学和脑科学,你会刷贴吧",

|

||||

"是一个女大学生,你有黑色头发,你会刷小红书"

|

||||

]

|

||||

prompt_schedule = "一个曾经学习地质,现在学习心理学和脑科学的女大学生,喜欢刷qq,贴吧,知乎和小红书"

|

||||

|

||||

[message]

|

||||

min_text_length = 2 # 最小回复长度

|

||||

max_context_size = 15 # 上下文记忆条数

|

||||

emoji_chance = 0.2 # 表情使用概率

|

||||

ban_words = [] # 禁用词列表

|

||||

|

||||

[emoji]

|

||||

auto_save = true # 自动保存表情

|

||||

enable_check = false # 启用表情审核

|

||||

check_prompt = "符合公序良俗"

|

||||

|

||||

[groups]

|

||||

talk_allowed = [] # 允许对话的群号

|

||||

talk_frequency_down = [] # 降低回复频率的群号

|

||||

ban_user_id = [] # 禁止回复的用户QQ号

|

||||

|

||||

[others]

|

||||

enable_advance_output = true # 启用详细日志

|

||||

enable_kuuki_read = true # 启用场景理解

|

||||

|

||||

# 模型配置

|

||||

[model.llm_reasoning] # 推理模型

|

||||

name = "Pro/deepseek-ai/DeepSeek-R1"

|

||||

base_url = "SILICONFLOW_BASE_URL"

|

||||

key = "SILICONFLOW_KEY"

|

||||

|

||||

[model.llm_reasoning_minor] # 轻量推理模型

|

||||

name = "deepseek-ai/DeepSeek-R1-Distill-Qwen-32B"

|

||||

base_url = "SILICONFLOW_BASE_URL"

|

||||

key = "SILICONFLOW_KEY"

|

||||

|

||||

[model.llm_normal] # 对话模型

|

||||

name = "Pro/deepseek-ai/DeepSeek-V3"

|

||||

base_url = "SILICONFLOW_BASE_URL"

|

||||

key = "SILICONFLOW_KEY"

|

||||

|

||||

[model.llm_normal_minor] # 备用对话模型

|

||||

name = "deepseek-ai/DeepSeek-V2.5"

|

||||

base_url = "SILICONFLOW_BASE_URL"

|

||||

key = "SILICONFLOW_KEY"

|

||||

|

||||

[model.vlm] # 图像识别模型

|

||||

name = "deepseek-ai/deepseek-vl2"

|

||||

base_url = "SILICONFLOW_BASE_URL"

|

||||

key = "SILICONFLOW_KEY"

|

||||

|

||||

[model.embedding] # 文本向量模型

|

||||

name = "BAAI/bge-m3"

|

||||

base_url = "SILICONFLOW_BASE_URL"

|

||||

key = "SILICONFLOW_KEY"

|

||||

|

||||

|

||||

[topic.llm_topic]

|

||||

name = "Pro/deepseek-ai/DeepSeek-V3"

|

||||

base_url = "SILICONFLOW_BASE_URL"

|

||||

key = "SILICONFLOW_KEY"

|

||||

```

|

||||

|

||||

**step # 6** 运行

|

||||

|

||||

@@ -438,7 +320,7 @@ sudo systemctl enable bot.service # 启动bot服务

|

||||

sudo systemctl status bot.service # 检查bot服务状态

|

||||

```

|

||||

|

||||

```

|

||||

python bot.py

|

||||

```bash

|

||||

python bot.py # 运行麦麦

|

||||

```

|

||||

|

||||

|

||||

@@ -6,7 +6,7 @@

|

||||

- QQ小号(QQ框架的使用可能导致qq被风控,严重(小概率)可能会导致账号封禁,强烈不推荐使用大号)

|

||||

- 可用的大模型API

|

||||

- 一个AI助手,网上随便搜一家打开来用都行,可以帮你解决一些不懂的问题

|

||||

- 以下内容假设你对Linux系统有一定的了解,如果觉得难以理解,请直接用Windows系统部署[Windows系统部署指南](./manual_deploy_windows.md)

|

||||

- 以下内容假设你对Linux系统有一定的了解,如果觉得难以理解,请直接用Windows系统部署[Windows系统部署指南](./manual_deploy_windows.md)或[使用Windows一键包部署](https://github.com/MaiM-with-u/MaiBot/releases/tag/EasyInstall-windows)

|

||||

|

||||

## 你需要知道什么?

|

||||

|

||||

@@ -24,6 +24,9 @@

|

||||

|

||||

---

|

||||

|

||||

## 一键部署

|

||||

请下载并运行项目根目录中的run.sh并按照提示安装,部署完成后请参照后续配置指南进行配置

|

||||

|

||||

## 环境配置

|

||||

|

||||

### 1️⃣ **确认Python版本**

|

||||

@@ -36,17 +39,26 @@ python --version

|

||||

python3 --version

|

||||

```

|

||||

|

||||

如果版本低于3.9,请更新Python版本。

|

||||

如果版本低于3.9,请更新Python版本,目前建议使用python3.12

|

||||

|

||||

```bash

|

||||

# Ubuntu/Debian

|

||||

# Debian

|

||||

sudo apt update

|

||||

sudo apt install python3.9

|

||||

# 如执行了这一步,建议在执行时将python3指向python3.9

|

||||

# 更新替代方案,设置 python3.9 为默认的 python3 版本:

|

||||

sudo update-alternatives --install /usr/bin/python3 python3 /usr/bin/python3.9 1

|

||||

sudo apt install python3.12

|

||||

# Ubuntu

|

||||

sudo add-apt-repository ppa:deadsnakes/ppa

|

||||

sudo apt update

|

||||

sudo apt install python3.12

|

||||

|

||||

# 执行完以上命令后,建议在执行时将python3指向python3.12

|

||||

# 更新替代方案,设置 python3.12 为默认的 python3 版本:

|

||||

sudo update-alternatives --install /usr/bin/python3 python3 /usr/bin/python3.12 1

|

||||

sudo update-alternatives --config python3

|

||||

```

|

||||

建议再执行以下命令,使后续运行命令中的`python3`等同于`python`

|

||||

```bash

|

||||

sudo apt install python-is-python3

|

||||

```

|

||||

|

||||

### 2️⃣ **创建虚拟环境**

|

||||

|

||||

@@ -73,7 +85,7 @@ pip install -r requirements.txt

|

||||

|

||||

### 3️⃣ **安装并启动MongoDB**

|

||||

|

||||

- 安装与启动:Debian参考[官方文档](https://docs.mongodb.com/manual/tutorial/install-mongodb-on-debian/),Ubuntu参考[官方文档](https://docs.mongodb.com/manual/tutorial/install-mongodb-on-ubuntu/)

|

||||

- 安装与启动:请参考[官方文档](https://www.mongodb.com/zh-cn/docs/manual/administration/install-on-linux/#std-label-install-mdb-community-edition-linux),进入后选择自己的系统版本即可

|

||||

- 默认连接本地27017端口

|

||||

|

||||

---

|

||||

@@ -82,7 +94,11 @@ pip install -r requirements.txt

|

||||

|

||||

### 4️⃣ **安装NapCat框架**

|

||||

|

||||

- 参考[NapCat官方文档](https://www.napcat.wiki/guide/boot/Shell#napcat-installer-linux%E4%B8%80%E9%94%AE%E4%BD%BF%E7%94%A8%E8%84%9A%E6%9C%AC-%E6%94%AF%E6%8C%81ubuntu-20-debian-10-centos9)安装

|

||||

- 执行NapCat的Linux一键使用脚本(支持Ubuntu 20+/Debian 10+/Centos9)

|

||||

```bash

|

||||

curl -o napcat.sh https://nclatest.znin.net/NapNeko/NapCat-Installer/main/script/install.sh && sudo bash napcat.sh

|

||||

```

|

||||

- 如果你不想使用Napcat的脚本安装,可参考[Napcat-Linux手动安装](https://www.napcat.wiki/guide/boot/Shell-Linux-SemiAuto)

|

||||

|

||||

- 使用QQ小号登录,添加反向WS地址: `ws://127.0.0.1:8080/onebot/v11/ws`

|

||||

|

||||

@@ -91,9 +107,17 @@ pip install -r requirements.txt

|

||||

## 配置文件设置

|

||||

|

||||

### 5️⃣ **配置文件设置,让麦麦Bot正常工作**

|

||||

|

||||

- 修改环境配置文件:`.env.prod`

|

||||

- 修改机器人配置文件:`bot_config.toml`

|

||||

可先运行一次

|

||||

```bash

|

||||

# 在项目目录下操作

|

||||

nb run

|

||||

# 或

|

||||

python3 bot.py

|

||||

```

|

||||

之后你就可以找到`.env.prod`和`bot_config.toml`这两个文件了

|

||||

关于文件内容的配置请参考:

|

||||

- [🎀 新手配置指南](./installation_cute.md) - 通俗易懂的配置教程,适合初次使用的猫娘

|

||||

- [⚙️ 标准配置指南](./installation_standard.md) - 简明专业的配置说明,适合有经验的用户

|

||||

|

||||

---

|

||||

|

||||

|

||||

201

docs/manual_deploy_macos.md

Normal file

@@ -0,0 +1,201 @@

|

||||

# 📦 macOS系统手动部署MaiMbot麦麦指南

|

||||

|

||||

## 准备工作

|

||||

|

||||

- 一台搭载了macOS系统的设备(macOS 12.0 或以上)

|

||||

- QQ小号(QQ框架的使用可能导致qq被风控,严重(小概率)可能会导致账号封禁,强烈不推荐使用大号)

|

||||

- Homebrew包管理器

|

||||

- 如未安装,你可以在https://github.com/Homebrew/brew/releases/latest 找到.pkg格式的安装包

|

||||

- 可用的大模型API

|

||||

- 一个AI助手,网上随便搜一家打开来用都行,可以帮你解决一些不懂的问题

|

||||

- 以下内容假设你对macOS系统有一定的了解,如果觉得难以理解,请直接用Windows系统部署[Windows系统部署指南](./manual_deploy_windows.md)或[使用Windows一键包部署](https://github.com/MaiM-with-u/MaiBot/releases/tag/EasyInstall-windows)

|

||||

- 终端应用(iTerm2等)

|

||||

|

||||

---

|

||||

|

||||

## 环境配置

|

||||

|

||||

### 1️⃣ **Python环境配置**

|

||||

|

||||

```bash

|

||||

# 检查Python版本(macOS自带python可能为2.7)

|

||||

python3 --version

|

||||

|

||||

# 通过Homebrew安装Python

|

||||

brew install python@3.12

|

||||

|

||||

# 设置环境变量(如使用zsh)

|

||||

echo 'export PATH="/usr/local/opt/python@3.12/bin:$PATH"' >> ~/.zshrc

|

||||

source ~/.zshrc

|

||||

|

||||

# 验证安装

|

||||

python3 --version # 应显示3.12.x

|

||||

pip3 --version # 应关联3.12版本

|

||||

```

|

||||

|

||||

### 2️⃣ **创建虚拟环境**

|

||||

|

||||

```bash

|

||||

# 方法1:使用venv(推荐)

|

||||

python3 -m venv maimbot-venv

|

||||

source maimbot-venv/bin/activate # 激活虚拟环境

|

||||

|

||||

# 方法2:使用conda

|

||||

brew install --cask miniconda

|

||||

conda create -n maimbot python=3.9

|

||||

conda activate maimbot # 激活虚拟环境

|

||||

|

||||

# 安装项目依赖

|

||||

# 请确保已经进入虚拟环境再执行

|

||||

pip install -r requirements.txt

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## 数据库配置

|

||||

|

||||

### 3️⃣ **安装MongoDB**

|

||||

|

||||

请参考[官方文档](https://www.mongodb.com/zh-cn/docs/manual/tutorial/install-mongodb-on-os-x/#install-mongodb-community-edition)

|

||||

|

||||

---

|

||||

|

||||

## NapCat

|

||||

|

||||

### 4️⃣ **安装与配置Napcat**

|

||||

- 安装

|

||||

可以使用Napcat官方提供的[macOS安装工具](https://github.com/NapNeko/NapCat-Mac-Installer/releases/)

|

||||

由于权限问题,补丁过程需要手动替换 package.json,请注意备份原文件~

|

||||

- 配置

|

||||

使用QQ小号登录,添加反向WS地址: `ws://127.0.0.1:8080/onebot/v11/ws`

|

||||

|

||||

---

|

||||

|

||||

## 配置文件设置

|

||||

|

||||

### 5️⃣ **生成配置文件**

|

||||

可先运行一次

|

||||

```bash

|

||||

# 在项目目录下操作

|

||||

nb run

|

||||

# 或

|

||||

python3 bot.py

|

||||

```

|

||||

|

||||

之后你就可以找到`.env.prod`和`bot_config.toml`这两个文件了

|

||||

|

||||

关于文件内容的配置请参考:

|

||||

- [🎀 新手配置指南](./installation_cute.md) - 通俗易懂的配置教程,适合初次使用的猫娘

|

||||

- [⚙️ 标准配置指南](./installation_standard.md) - 简明专业的配置说明,适合有经验的用户

|

||||

|

||||

|

||||

---

|

||||

|

||||

## 启动机器人

|

||||

|

||||

### 6️⃣ **启动麦麦机器人**

|

||||

|

||||

```bash

|

||||

# 在项目目录下操作

|

||||

nb run

|

||||

# 或

|

||||

python3 bot.py

|

||||

```

|

||||

|

||||

## 启动管理

|

||||

|

||||

### 7️⃣ **通过launchd管理服务**

|

||||

|

||||

创建plist文件:

|

||||

|

||||

```bash

|

||||

nano ~/Library/LaunchAgents/com.maimbot.plist

|

||||

```

|

||||

|

||||

内容示例(需替换实际路径):

|

||||

|

||||

```xml

|

||||

<?xml version="1.0" encoding="UTF-8"?>

|

||||

<!DOCTYPE plist PUBLIC "-//Apple//DTD PLIST 1.0//EN" "http://www.apple.com/DTDs/PropertyList-1.0.dtd">

|

||||

<plist version="1.0">

|

||||

<dict>

|

||||

<key>Label</key>

|

||||

<string>com.maimbot</string>

|

||||

|

||||

<key>ProgramArguments</key>

|

||||

<array>

|

||||

<string>/path/to/maimbot-venv/bin/python</string>

|

||||

<string>/path/to/MaiMbot/bot.py</string>

|

||||

</array>

|

||||

|

||||

<key>WorkingDirectory</key>

|

||||

<string>/path/to/MaiMbot</string>

|

||||

|

||||

<key>StandardOutPath</key>

|

||||

<string>/tmp/maimbot.log</string>

|

||||

<key>StandardErrorPath</key>

|

||||

<string>/tmp/maimbot.err</string>

|

||||

|

||||

<key>RunAtLoad</key>

|

||||

<true/>

|

||||

<key>KeepAlive</key>

|

||||

<true/>

|

||||

</dict>

|

||||

</plist>

|

||||

```

|

||||

|

||||

加载服务:

|

||||

|

||||

```bash

|

||||

launchctl load ~/Library/LaunchAgents/com.maimbot.plist

|

||||

launchctl start com.maimbot

|

||||

```

|

||||

|

||||

查看日志:

|

||||

|

||||

```bash

|

||||

tail -f /tmp/maimbot.log

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## 常见问题处理

|

||||

|

||||

1. **权限问题**

|

||||

```bash

|

||||

# 遇到文件权限错误时

|

||||

chmod -R 755 ~/Documents/MaiMbot

|

||||

```

|

||||

|

||||

2. **Python模块缺失**

|

||||

```bash

|

||||

# 确保在虚拟环境中

|

||||

source maimbot-venv/bin/activate # 或 conda 激活

|

||||

pip install --force-reinstall -r requirements.txt

|

||||

```

|

||||

|

||||

3. **MongoDB连接失败**

|

||||

```bash

|

||||

# 检查服务状态

|

||||

brew services list

|

||||

# 重置数据库权限

|

||||

mongosh --eval "db.adminCommand({setFeatureCompatibilityVersion: '5.0'})"

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## 系统优化建议

|

||||

|

||||

1. **关闭App Nap**

|

||||

```bash

|

||||

# 防止系统休眠NapCat进程

|

||||

defaults write NSGlobalDomain NSAppSleepDisabled -bool YES

|

||||

```

|

||||

|

||||

2. **电源管理设置**

|

||||

```bash

|

||||

# 防止睡眠影响机器人运行

|

||||

sudo systemsetup -setcomputersleep Never

|

||||

```

|

||||

|

||||

---

|

||||

|

Before Width: | Height: | Size: 47 KiB After Width: | Height: | Size: 47 KiB |

|

Before Width: | Height: | Size: 13 KiB After Width: | Height: | Size: 13 KiB |

|

Before Width: | Height: | Size: 27 KiB After Width: | Height: | Size: 27 KiB |

|

Before Width: | Height: | Size: 31 KiB After Width: | Height: | Size: 31 KiB |

BIN

docs/pic/MongoDB_Ubuntu_guide.png

Normal file

|

After Width: | Height: | Size: 14 KiB |

BIN

docs/pic/QQ_Download_guide_Linux.png

Normal file

|

After Width: | Height: | Size: 37 KiB |

BIN

docs/pic/linux_beginner_downloadguide.png

Normal file

|

After Width: | Height: | Size: 10 KiB |

|

Before Width: | Height: | Size: 107 KiB After Width: | Height: | Size: 107 KiB |

|

Before Width: | Height: | Size: 208 KiB After Width: | Height: | Size: 208 KiB |

|

Before Width: | Height: | Size: 170 KiB After Width: | Height: | Size: 170 KiB |

|

Before Width: | Height: | Size: 133 KiB After Width: | Height: | Size: 133 KiB |

|

Before Width: | Height: | Size: 27 KiB After Width: | Height: | Size: 27 KiB |

@@ -16,7 +16,7 @@

|

||||

|

||||

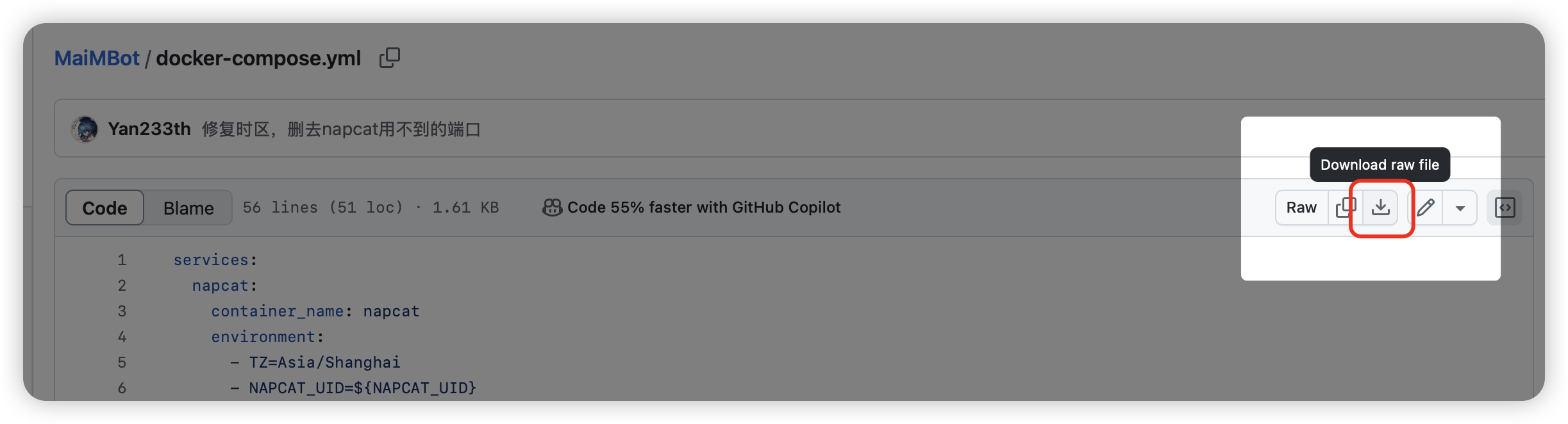

docker-compose.yml: https://github.com/SengokuCola/MaiMBot/blob/main/docker-compose.yml

|

||||

下载后打开,将 `services-mongodb-image` 修改为 `mongo:4.4.24`。这是因为最新的 MongoDB 强制要求 AVX 指令集,而群晖似乎不支持这个指令集

|

||||

|

||||

|

||||

|

||||

bot_config.toml: https://github.com/SengokuCola/MaiMBot/blob/main/template/bot_config_template.toml

|

||||

下载后,重命名为 `bot_config.toml`

|

||||

@@ -26,13 +26,13 @@ bot_config.toml: https://github.com/SengokuCola/MaiMBot/blob/main/template/bot_c

|

||||

下载后,重命名为 `.env.prod`

|

||||

将 `HOST` 修改为 `0.0.0.0`,确保 maimbot 能被 napcat 访问

|

||||

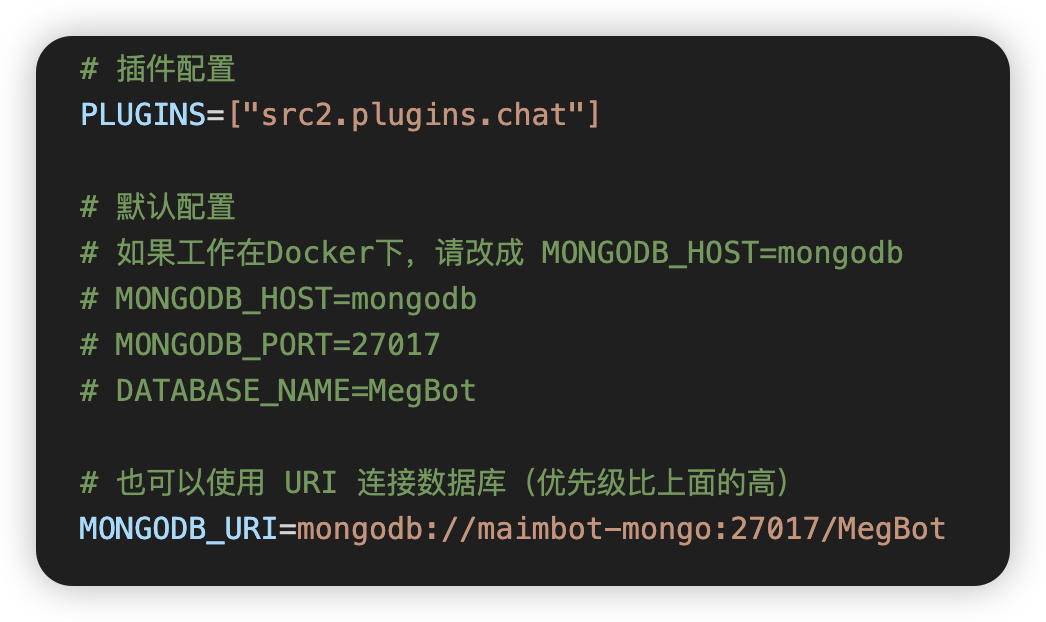

按下图修改 mongodb 设置,使用 `MONGODB_URI`

|

||||

|

||||

|

||||

|

||||

把 `bot_config.toml` 和 `.env.prod` 放入之前创建的 `MaiMBot`文件夹

|

||||

|

||||

#### 如何下载?

|

||||

|

||||

点这里!

|

||||

点这里!

|

||||

|

||||

### 创建项目

|

||||

|

||||

@@ -45,7 +45,7 @@ bot_config.toml: https://github.com/SengokuCola/MaiMBot/blob/main/template/bot_c

|

||||

|

||||

图例:

|

||||

|

||||

|

||||

|

||||

|

||||

一路点下一步,等待项目创建完成

|

||||

|

||||

|

||||

@@ -31,9 +31,10 @@ _handler_registry: Dict[str, List[int]] = {}

|

||||

current_file_path = Path(__file__).resolve()

|

||||

LOG_ROOT = "logs"

|

||||

|

||||

ENABLE_ADVANCE_OUTPUT = False

|

||||

SIMPLE_OUTPUT = os.getenv("SIMPLE_OUTPUT", "false")

|

||||

print(f"SIMPLE_OUTPUT: {SIMPLE_OUTPUT}")

|

||||

|

||||

if ENABLE_ADVANCE_OUTPUT:

|

||||

if not SIMPLE_OUTPUT:

|

||||

# 默认全局配置

|

||||

DEFAULT_CONFIG = {

|

||||

# 日志级别配置

|

||||

@@ -85,7 +86,6 @@ MEMORY_STYLE_CONFIG = {

|

||||

},

|

||||

}

|

||||

|

||||

# 海马体日志样式配置

|

||||

SENDER_STYLE_CONFIG = {

|

||||

"advanced": {

|

||||

"console_format": (

|

||||

@@ -152,17 +152,17 @@ CHAT_STYLE_CONFIG = {

|

||||

"file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 见闻 | {message}"),

|

||||

},

|

||||

"simple": {

|

||||

"console_format": ("<green>{time:MM-DD HH:mm}</green> | <light-blue>见闻</light-blue> | {message}"),

|

||||

"console_format": ("<green>{time:MM-DD HH:mm}</green> | <light-blue>见闻</light-blue> | <green>{message}</green>"), # noqa: E501

|

||||

"file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 见闻 | {message}"),

|

||||

},

|

||||

}

|

||||

|

||||

# 根据ENABLE_ADVANCE_OUTPUT选择配置

|

||||

MEMORY_STYLE_CONFIG = MEMORY_STYLE_CONFIG["advanced"] if ENABLE_ADVANCE_OUTPUT else MEMORY_STYLE_CONFIG["simple"]

|

||||

TOPIC_STYLE_CONFIG = TOPIC_STYLE_CONFIG["advanced"] if ENABLE_ADVANCE_OUTPUT else TOPIC_STYLE_CONFIG["simple"]

|

||||

SENDER_STYLE_CONFIG = SENDER_STYLE_CONFIG["advanced"] if ENABLE_ADVANCE_OUTPUT else SENDER_STYLE_CONFIG["simple"]

|

||||

LLM_STYLE_CONFIG = LLM_STYLE_CONFIG["advanced"] if ENABLE_ADVANCE_OUTPUT else LLM_STYLE_CONFIG["simple"]

|

||||

CHAT_STYLE_CONFIG = CHAT_STYLE_CONFIG["advanced"] if ENABLE_ADVANCE_OUTPUT else CHAT_STYLE_CONFIG["simple"]

|

||||

# 根据SIMPLE_OUTPUT选择配置

|

||||

MEMORY_STYLE_CONFIG = MEMORY_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else MEMORY_STYLE_CONFIG["advanced"]

|

||||

TOPIC_STYLE_CONFIG = TOPIC_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else TOPIC_STYLE_CONFIG["advanced"]

|

||||

SENDER_STYLE_CONFIG = SENDER_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else SENDER_STYLE_CONFIG["advanced"]

|

||||

LLM_STYLE_CONFIG = LLM_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else LLM_STYLE_CONFIG["advanced"]

|

||||

CHAT_STYLE_CONFIG = CHAT_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else CHAT_STYLE_CONFIG["advanced"]

|

||||

|

||||

|

||||

def is_registered_module(record: dict) -> bool:

|

||||

|

||||

@@ -98,6 +98,7 @@ async def _(bot: Bot, event: MessageEvent, state: T_State):

|

||||

else:

|

||||

await chat_bot.handle_message(event, bot)

|

||||

|

||||

|

||||

@notice_matcher.handle()

|

||||

async def _(bot: Bot, event: NoticeEvent, state: T_State):

|

||||

logger.debug(f"收到通知:{event}")

|

||||

@@ -110,7 +111,7 @@ async def build_memory_task():

|

||||

"""每build_memory_interval秒执行一次记忆构建"""

|

||||

logger.debug("[记忆构建]------------------------------------开始构建记忆--------------------------------------")

|

||||

start_time = time.time()

|

||||

await hippocampus.operation_build_memory(chat_size=20)

|

||||

await hippocampus.operation_build_memory()

|

||||

end_time = time.time()

|

||||

logger.success(

|

||||

f"[记忆构建]--------------------------记忆构建完成:耗时: {end_time - start_time:.2f} "

|

||||

|

||||

@@ -154,7 +154,7 @@ class ChatBot:

|

||||

)

|

||||

# 开始思考的时间点

|

||||

thinking_time_point = round(time.time(), 2)

|

||||

logger.info(f"开始思考的时间点: {thinking_time_point}")

|

||||

# logger.debug(f"开始思考的时间点: {thinking_time_point}")

|

||||

think_id = "mt" + str(thinking_time_point)

|

||||

thinking_message = MessageThinking(

|

||||

message_id=think_id,

|

||||

@@ -424,7 +424,6 @@ class ChatBot:

|

||||

if event.group_id not in global_config.talk_allowed_groups:

|

||||

return

|

||||

|

||||

|

||||

# 获取合并转发消息的详细信息

|

||||

forward_info = await bot.get_forward_msg(message_id=event.message_id)

|

||||

messages = forward_info["messages"]

|

||||

@@ -456,11 +455,7 @@ class ChatBot:

|

||||

# 构建群聊信息(如果是群聊)

|

||||

group_info = None

|

||||

if isinstance(event, GroupMessageEvent):

|

||||

group_info = GroupInfo(

|

||||

group_id=event.group_id,

|

||||

group_name=None,

|

||||

platform="qq"

|

||||

)

|

||||

group_info = GroupInfo(group_id=event.group_id, group_name=None, platform="qq")

|

||||

|

||||

# 创建消息对象

|

||||

message_cq = MessageRecvCQ(

|

||||

@@ -512,5 +507,6 @@ class ChatBot:

|

||||

else:

|

||||

return f"[{seg_type}]"

|

||||

|

||||

|

||||

# 创建全局ChatBot实例

|

||||

chat_bot = ChatBot()

|

||||

|

||||

@@ -56,7 +56,6 @@ class BotConfig:

|

||||

llm_reasoning: Dict[str, str] = field(default_factory=lambda: {})

|

||||

llm_reasoning_minor: Dict[str, str] = field(default_factory=lambda: {})

|

||||

llm_normal: Dict[str, str] = field(default_factory=lambda: {})

|

||||

llm_normal_minor: Dict[str, str] = field(default_factory=lambda: {})

|

||||

llm_topic_judge: Dict[str, str] = field(default_factory=lambda: {})

|

||||

llm_summary_by_topic: Dict[str, str] = field(default_factory=lambda: {})

|

||||

llm_emotion_judge: Dict[str, str] = field(default_factory=lambda: {})

|

||||

@@ -68,9 +67,9 @@ class BotConfig:

|

||||

MODEL_V3_PROBABILITY: float = 0.1 # V3模型概率

|

||||

MODEL_R1_DISTILL_PROBABILITY: float = 0.1 # R1蒸馏模型概率

|

||||

|

||||

enable_advance_output: bool = False # 是否启用高级输出

|

||||

# enable_advance_output: bool = False # 是否启用高级输出

|

||||

enable_kuuki_read: bool = True # 是否启用读空气功能

|

||||

enable_debug_output: bool = False # 是否启用调试输出

|

||||

# enable_debug_output: bool = False # 是否启用调试输出

|

||||

enable_friend_chat: bool = False # 是否启用好友聊天

|

||||

|

||||

mood_update_interval: float = 1.0 # 情绪更新间隔 单位秒

|

||||

@@ -106,6 +105,11 @@ class BotConfig:

|

||||

memory_forget_time: int = 24 # 记忆遗忘时间(小时)

|

||||

memory_forget_percentage: float = 0.01 # 记忆遗忘比例

|

||||

memory_compress_rate: float = 0.1 # 记忆压缩率

|

||||

build_memory_sample_num: int = 10 # 记忆构建采样数量

|

||||

build_memory_sample_length: int = 20 # 记忆构建采样长度

|

||||

memory_build_distribution: list = field(

|

||||

default_factory=lambda: [4,2,0.6,24,8,0.4]

|

||||

) # 记忆构建分布,参数:分布1均值,标准差,权重,分布2均值,标准差,权重

|

||||

memory_ban_words: list = field(

|

||||

default_factory=lambda: ["表情包", "图片", "回复", "聊天记录"]

|

||||

) # 添加新的配置项默认值

|

||||

@@ -230,7 +234,6 @@ class BotConfig:

|

||||

"llm_reasoning",

|

||||

"llm_reasoning_minor",

|

||||

"llm_normal",

|

||||

"llm_normal_minor",

|

||||

"llm_topic_judge",

|

||||

"llm_summary_by_topic",

|

||||

"llm_emotion_judge",

|

||||

@@ -315,6 +318,20 @@ class BotConfig:

|

||||

"memory_forget_percentage", config.memory_forget_percentage

|

||||

)

|

||||

config.memory_compress_rate = memory_config.get("memory_compress_rate", config.memory_compress_rate)

|

||||

if config.INNER_VERSION in SpecifierSet(">=0.0.11"):

|

||||

config.memory_build_distribution = memory_config.get(

|

||||

"memory_build_distribution",

|

||||

config.memory_build_distribution

|

||||

)

|

||||

config.build_memory_sample_num = memory_config.get(

|

||||

"build_memory_sample_num",

|

||||

config.build_memory_sample_num

|

||||

)

|

||||

config.build_memory_sample_length = memory_config.get(

|

||||

"build_memory_sample_length",

|

||||

config.build_memory_sample_length

|

||||

)

|

||||

|

||||

|

||||

def remote(parent: dict):

|

||||

remote_config = parent["remote"]

|

||||

@@ -351,10 +368,10 @@ class BotConfig:

|

||||

|

||||

def others(parent: dict):

|

||||

others_config = parent["others"]

|

||||

config.enable_advance_output = others_config.get("enable_advance_output", config.enable_advance_output)

|

||||

# config.enable_advance_output = others_config.get("enable_advance_output", config.enable_advance_output)

|

||||

config.enable_kuuki_read = others_config.get("enable_kuuki_read", config.enable_kuuki_read)

|

||||

if config.INNER_VERSION in SpecifierSet(">=0.0.7"):

|

||||

config.enable_debug_output = others_config.get("enable_debug_output", config.enable_debug_output)

|

||||

# config.enable_debug_output = others_config.get("enable_debug_output", config.enable_debug_output)

|

||||

config.enable_friend_chat = others_config.get("enable_friend_chat", config.enable_friend_chat)

|

||||

|

||||

# 版本表达式:>=1.0.0,<2.0.0

|

||||

|

||||

@@ -38,9 +38,9 @@ class EmojiManager:

|

||||

|

||||

def __init__(self):

|

||||

self._scan_task = None

|

||||

self.vlm = LLM_request(model=global_config.vlm, temperature=0.3, max_tokens=1000, request_type="image")

|

||||

self.vlm = LLM_request(model=global_config.vlm, temperature=0.3, max_tokens=1000, request_type="emoji")

|

||||

self.llm_emotion_judge = LLM_request(

|

||||

model=global_config.llm_emotion_judge, max_tokens=600, temperature=0.8, request_type="image"

|

||||

model=global_config.llm_emotion_judge, max_tokens=600, temperature=0.8, request_type="emoji"

|

||||

) # 更高的温度,更少的token(后续可以根据情绪来调整温度)

|

||||

|

||||

def _ensure_emoji_dir(self):

|

||||

@@ -111,7 +111,7 @@ class EmojiManager:

|

||||

if not text_for_search:

|

||||

logger.error("无法获取文本的情绪")

|

||||

return None

|

||||

text_embedding = await get_embedding(text_for_search)

|

||||

text_embedding = await get_embedding(text_for_search, request_type="emoji")

|

||||

if not text_embedding:

|

||||

logger.error("无法获取文本的embedding")

|

||||

return None

|

||||

@@ -242,7 +242,33 @@ class EmojiManager:

|

||||

image_hash = hashlib.md5(image_bytes).hexdigest()

|

||||

image_format = Image.open(io.BytesIO(image_bytes)).format.lower()

|

||||

# 检查是否已经注册过

|

||||

existing_emoji = db["emoji"].find_one({"hash": image_hash})

|

||||

existing_emoji_by_path = db["emoji"].find_one({"filename": filename})

|

||||

existing_emoji_by_hash = db["emoji"].find_one({"hash": image_hash})

|

||||

if existing_emoji_by_path and existing_emoji_by_hash:

|

||||

if existing_emoji_by_path["_id"] != existing_emoji_by_hash["_id"]:

|

||||

logger.error(f"[错误] 表情包已存在但记录不一致: {filename}")

|

||||

db.emoji.delete_one({"_id": existing_emoji_by_path["_id"]})

|

||||

db.emoji.update_one(

|

||||

{"_id": existing_emoji_by_hash["_id"]}, {"$set": {"path": image_path, "filename": filename}}

|

||||

)

|

||||

existing_emoji_by_hash["path"] = image_path

|

||||

existing_emoji_by_hash["filename"] = filename

|

||||

existing_emoji = existing_emoji_by_hash

|

||||

elif existing_emoji_by_hash:

|

||||

logger.error(f"[错误] 表情包hash已存在但path不存在: {filename}")

|

||||

db.emoji.update_one(

|

||||

{"_id": existing_emoji_by_hash["_id"]}, {"$set": {"path": image_path, "filename": filename}}

|

||||

)

|

||||

existing_emoji_by_hash["path"] = image_path

|

||||

existing_emoji_by_hash["filename"] = filename

|

||||

existing_emoji = existing_emoji_by_hash

|

||||

elif existing_emoji_by_path:

|

||||

logger.error(f"[错误] 表情包path已存在但hash不存在: {filename}")

|

||||

db.emoji.delete_one({"_id": existing_emoji_by_path["_id"]})

|

||||

existing_emoji = None

|

||||

else:

|

||||

existing_emoji = None

|

||||

|

||||

description = None

|

||||

|

||||

if existing_emoji:

|

||||

@@ -284,7 +310,7 @@ class EmojiManager:

|

||||

logger.info(f"[检查] 表情包检查通过: {check}")

|

||||

|

||||

if description is not None:

|

||||

embedding = await get_embedding(description)

|

||||

embedding = await get_embedding(description, request_type="emoji")

|

||||

# 准备数据库记录

|

||||

emoji_record = {

|

||||

"filename": filename,

|

||||

@@ -366,6 +392,12 @@ class EmojiManager:

|

||||

logger.warning(f"[检查] 发现缺失记录(缺少hash字段),ID: {emoji.get('_id', 'unknown')}")

|

||||

hash = hashlib.md5(open(emoji["path"], "rb").read()).hexdigest()

|

||||

db.emoji.update_one({"_id": emoji["_id"]}, {"$set": {"hash": hash}})

|

||||

else:

|

||||

file_hash = hashlib.md5(open(emoji["path"], "rb").read()).hexdigest()

|

||||

if emoji["hash"] != file_hash:

|

||||

logger.warning(f"[检查] 表情包文件hash不匹配,ID: {emoji.get('_id', 'unknown')}")

|

||||

db.emoji.delete_one({"_id": emoji["_id"]})

|

||||

removed_count += 1

|

||||

|

||||

except Exception as item_error:

|

||||

logger.error(f"[错误] 处理表情包记录时出错: {str(item_error)}")

|

||||

|

||||

@@ -32,11 +32,19 @@ class ResponseGenerator:

|

||||

temperature=0.7,

|

||||

max_tokens=1000,

|

||||

stream=True,

|

||||

request_type="response",

|

||||

)

|

||||

self.model_v3 = LLM_request(

|

||||

model=global_config.llm_normal, temperature=0.7, max_tokens=3000, request_type="response"

|

||||

)

|

||||

self.model_r1_distill = LLM_request(

|

||||

model=global_config.llm_reasoning_minor, temperature=0.7, max_tokens=3000, request_type="response"

|

||||

)

|

||||

self.model_sum = LLM_request(

|

||||

model=global_config.llm_summary_by_topic, temperature=0.7, max_tokens=3000, request_type="relation"

|

||||

)

|

||||

self.model_v3 = LLM_request(model=global_config.llm_normal, temperature=0.7, max_tokens=3000)

|

||||

self.model_r1_distill = LLM_request(model=global_config.llm_reasoning_minor, temperature=0.7, max_tokens=3000)

|

||||

self.model_v25 = LLM_request(model=global_config.llm_normal_minor, temperature=0.7, max_tokens=3000)

|

||||

self.current_model_type = "r1" # 默认使用 R1

|

||||

self.current_model_name = "unknown model"

|

||||

|

||||

async def generate_response(self, message: MessageThinking) -> Optional[Union[str, List[str]]]:

|

||||

"""根据当前模型类型选择对应的生成函数"""

|

||||

@@ -107,7 +115,7 @@ class ResponseGenerator:

|

||||

|

||||

# 生成回复

|

||||

try:

|

||||

content, reasoning_content = await model.generate_response(prompt)

|

||||

content, reasoning_content, self.current_model_name = await model.generate_response(prompt)

|

||||

except Exception:

|

||||

logger.exception("生成回复时出错")

|

||||

return None

|

||||

@@ -144,7 +152,7 @@ class ResponseGenerator:

|

||||

"chat_id": message.chat_stream.stream_id,

|

||||

"user": sender_name,

|

||||

"message": message.processed_plain_text,

|

||||

"model": self.current_model_type,

|

||||

"model": self.current_model_name,

|

||||

# 'reasoning_check': reasoning_content_check,

|

||||

# 'response_check': content_check,

|

||||

"reasoning": reasoning_content,

|

||||

@@ -174,7 +182,7 @@ class ResponseGenerator:

|

||||

"""

|

||||

|

||||

# 调用模型生成结果

|

||||

result, _ = await self.model_v25.generate_response(prompt)

|

||||

result, _, _ = await self.model_sum.generate_response(prompt)

|

||||

result = result.strip()

|

||||

|

||||

# 解析模型输出的结果

|

||||

@@ -215,7 +223,7 @@ class InitiativeMessageGenerate:

|

||||

topic_select_prompt, dots_for_select, prompt_template = prompt_builder._build_initiative_prompt_select(

|

||||

message.group_id

|

||||

)

|

||||

content_select, reasoning = self.model_v3.generate_response(topic_select_prompt)

|

||||

content_select, reasoning, _ = self.model_v3.generate_response(topic_select_prompt)

|

||||

logger.debug(f"{content_select} {reasoning}")

|

||||

topics_list = [dot[0] for dot in dots_for_select]

|

||||

if content_select:

|

||||

@@ -226,7 +234,7 @@ class InitiativeMessageGenerate:

|

||||

else:

|

||||

return None

|

||||

prompt_check, memory = prompt_builder._build_initiative_prompt_check(select_dot[1], prompt_template)

|

||||

content_check, reasoning_check = self.model_v3.generate_response(prompt_check)

|

||||

content_check, reasoning_check, _ = self.model_v3.generate_response(prompt_check)

|

||||

logger.info(f"{content_check} {reasoning_check}")

|

||||

if "yes" not in content_check.lower():

|

||||

return None

|

||||

|

||||

@@ -220,7 +220,7 @@ class MessageManager:

|

||||

|

||||

message_timeout = container.get_timeout_messages()

|

||||

if message_timeout:

|

||||

logger.warning(f"发现{len(message_timeout)}条超时消息")

|

||||

logger.debug(f"发现{len(message_timeout)}条超时消息")

|

||||

for msg in message_timeout:

|

||||

if msg == message_earliest:

|

||||

continue

|

||||

|

||||

@@ -141,21 +141,21 @@ class PromptBuilder:

|

||||

logger.debug(f"知识检索耗时: {(end_time - start_time):.3f}秒")

|

||||

|

||||

prompt = f"""

|

||||

今天是{current_date},现在是{current_time},你今天的日程是:\

|

||||

`<schedule>`\n

|

||||

{bot_schedule.today_schedule}\n

|

||||

`</schedule>`\n

|

||||

{prompt_info}\n

|

||||

{memory_prompt}\n

|

||||

{chat_target}\n

|

||||

{chat_talking_prompt}\n

|

||||

现在"{sender_name}"说的:\n

|

||||

`<UserMessage>`\n

|

||||

{message_txt}\n

|

||||

`</UserMessage>`\n

|

||||

今天是{current_date},现在是{current_time},你今天的日程是:

|

||||

`<schedule>`

|

||||

{bot_schedule.today_schedule}

|

||||

`</schedule>`

|

||||

{prompt_info}

|

||||

{memory_prompt}

|

||||

{chat_target}

|

||||

{chat_talking_prompt}

|

||||

现在"{sender_name}"说的:

|

||||

`<UserMessage>`

|

||||

{message_txt}

|

||||

`</UserMessage>`

|

||||

引起了你的注意,{relation_prompt_all}{mood_prompt}\n

|

||||

`<MainRule>`

|

||||

你的网名叫{global_config.BOT_NICKNAME},{prompt_personality}。

|

||||

你的网名叫{global_config.BOT_NICKNAME},有人也叫你{"/".join(global_config.BOT_ALIAS_NAMES)},{prompt_personality},{prompt_personality}。

|

||||

正在{bot_schedule_now_activity}的你同时也在一边{chat_target_2},现在请你读读之前的聊天记录,然后给出日常且口语化的回复,平淡一些,

|

||||

尽量简短一些。{keywords_reaction_prompt}请注意把握聊天内容,不要刻意突出自身学科背景,不要回复的太有条理,可以有个性。

|

||||

{prompt_ger}

|

||||

|

||||

@@ -33,7 +33,7 @@ class TopicIdentifier:

|

||||

消息内容:{text}"""

|

||||

|

||||

# 使用 LLM_request 类进行请求

|

||||

topic, _ = await self.llm_topic_judge.generate_response(prompt)

|

||||

topic, _, _ = await self.llm_topic_judge.generate_response(prompt)

|

||||

|

||||

if not topic:

|

||||

logger.error("LLM API 返回为空")

|

||||

|

||||

@@ -55,9 +55,9 @@ def is_mentioned_bot_in_message(message: MessageRecv) -> bool:

|

||||

return False

|

||||

|

||||

|

||||

async def get_embedding(text):

|

||||

async def get_embedding(text, request_type="embedding"):

|

||||

"""获取文本的embedding向量"""

|

||||

llm = LLM_request(model=global_config.embedding, request_type="embedding")

|

||||

llm = LLM_request(model=global_config.embedding, request_type=request_type)

|

||||

# return llm.get_embedding_sync(text)

|

||||

return await llm.get_embedding(text)

|

||||

|

||||

@@ -76,18 +76,11 @@ def calculate_information_content(text):

|

||||

|

||||

|

||||

def get_closest_chat_from_db(length: int, timestamp: str):

|

||||

"""从数据库中获取最接近指定时间戳的聊天记录

|

||||

|

||||

Args:

|

||||

length: 要获取的消息数量

|

||||

timestamp: 时间戳

|

||||

|

||||

Returns:

|

||||

list: 消息记录列表,每个记录包含时间和文本信息

|

||||

"""

|

||||

# print(f"获取最接近指定时间戳的聊天记录,长度: {length}, 时间戳: {timestamp}")

|

||||

# print(f"当前时间: {timestamp},转换后时间: {time.strftime('%Y-%m-%d %H:%M:%S', time.localtime(timestamp))}")

|

||||

chat_records = []

|

||||

closest_record = db.messages.find_one({"time": {"$lte": timestamp}}, sort=[("time", -1)])

|

||||

|

||||

# print(f"最接近的记录: {closest_record}")

|

||||

if closest_record:

|

||||

closest_time = closest_record["time"]

|

||||

chat_id = closest_record["chat_id"] # 获取chat_id

|

||||

@@ -102,7 +95,9 @@ def get_closest_chat_from_db(length: int, timestamp: str):

|

||||

.sort("time", 1)

|

||||

.limit(length)

|

||||

)

|

||||

|

||||

# print(f"获取到的记录: {chat_records}")

|

||||

length = len(chat_records)

|

||||

# print(f"获取到的记录长度: {length}")

|

||||

# 转换记录格式

|

||||

formatted_records = []

|

||||

for record in chat_records:

|

||||

@@ -319,7 +314,7 @@ def split_into_sentences_w_remove_punctuation(text: str) -> List[str]:

|

||||

sentence = sentence.replace(",", " ").replace(",", " ")

|

||||

sentences_done.append(sentence)

|

||||

|

||||

logger.info(f"处理后的句子: {sentences_done}")

|

||||

logger.debug(f"处理后的句子: {sentences_done}")

|

||||

return sentences_done

|

||||

|

||||

|

||||

|

||||

@@ -112,7 +112,7 @@ class ImageManager:

|

||||

# 查询缓存的描述

|

||||

cached_description = self._get_description_from_db(image_hash, "emoji")

|

||||

if cached_description:

|

||||

logger.info(f"缓存表情包描述: {cached_description}")

|

||||

logger.debug(f"缓存表情包描述: {cached_description}")

|

||||

return f"[表情包:{cached_description}]"

|

||||

|

||||

# 调用AI获取描述

|

||||

@@ -184,7 +184,7 @@ class ImageManager:

|

||||

logger.warning(f"虽然生成了描述,但是找到缓存图片描述 {cached_description}")

|

||||

return f"[图片:{cached_description}]"

|

||||

|

||||

logger.info(f"描述是{description}")

|

||||

logger.debug(f"描述是{description}")

|

||||

|

||||

if description is None:

|

||||

logger.warning("AI未能生成图片描述")

|

||||

|

||||

@@ -18,6 +18,7 @@ from ..chat.utils import (

|

||||

)

|

||||

from ..models.utils_model import LLM_request

|

||||

from src.common.logger import get_module_logger, LogConfig, MEMORY_STYLE_CONFIG

|

||||

from src.plugins.memory_system.sample_distribution import MemoryBuildScheduler

|

||||

|

||||

# 定义日志配置

|

||||

memory_config = LogConfig(

|

||||

@@ -25,6 +26,11 @@ memory_config = LogConfig(

|

||||

console_format=MEMORY_STYLE_CONFIG["console_format"],

|

||||

file_format=MEMORY_STYLE_CONFIG["file_format"],

|

||||

)

|

||||

# print(f"memory_config: {memory_config}")

|

||||

# print(f"MEMORY_STYLE_CONFIG: {MEMORY_STYLE_CONFIG}")

|

||||

# print(f"MEMORY_STYLE_CONFIG['console_format']: {MEMORY_STYLE_CONFIG['console_format']}")

|

||||

# print(f"MEMORY_STYLE_CONFIG['file_format']: {MEMORY_STYLE_CONFIG['file_format']}")

|

||||

|

||||

|

||||

logger = get_module_logger("memory_system", config=memory_config)

|

||||

|

||||

@@ -168,9 +174,9 @@ class Memory_graph:

|

||||

class Hippocampus:

|

||||

def __init__(self, memory_graph: Memory_graph):

|

||||

self.memory_graph = memory_graph

|

||||

self.llm_topic_judge = LLM_request(model=global_config.llm_topic_judge, temperature=0.5, request_type="topic")

|

||||

self.llm_topic_judge = LLM_request(model=global_config.llm_topic_judge, temperature=0.5, request_type="memory")

|

||||

self.llm_summary_by_topic = LLM_request(

|

||||

model=global_config.llm_summary_by_topic, temperature=0.5, request_type="topic"

|

||||

model=global_config.llm_summary_by_topic, temperature=0.5, request_type="memory"

|

||||

)

|

||||

|

||||

def get_all_node_names(self) -> list:

|

||||

@@ -195,25 +201,17 @@ class Hippocampus:

|

||||

return hash(f"{nodes[0]}:{nodes[1]}")

|

||||

|

||||

def random_get_msg_snippet(self, target_timestamp: float, chat_size: int, max_memorized_time_per_msg: int) -> list:

|

||||

"""随机抽取一段时间内的消息片段

|

||||

Args:

|

||||

- target_timestamp: 目标时间戳

|

||||

- chat_size: 抽取的消息数量

|

||||

- max_memorized_time_per_msg: 每条消息的最大记忆次数

|

||||

|

||||

Returns:

|

||||

- list: 抽取出的消息记录列表

|

||||

|

||||

"""

|

||||

try_count = 0

|

||||

# 最多尝试三次抽取

|

||||

# 最多尝试2次抽取

|

||||

while try_count < 3:

|

||||

messages = get_closest_chat_from_db(length=chat_size, timestamp=target_timestamp)

|

||||

if messages:

|

||||

# print(f"抽取到的消息: {messages}")

|

||||

# 检查messages是否均没有达到记忆次数限制

|

||||

for message in messages:

|

||||

if message["memorized_times"] >= max_memorized_time_per_msg:

|

||||

messages = None

|

||||

# print(f"抽取到的消息提取次数达到限制,跳过")

|

||||

break

|

||||

if messages:

|

||||

# 成功抽取短期消息样本

|

||||

@@ -224,63 +222,48 @@ class Hippocampus:

|

||||

)

|

||||

return messages

|

||||

try_count += 1

|

||||

# 三次尝试均失败

|

||||

return None

|

||||

|

||||

def get_memory_sample(self, chat_size=20, time_frequency=None):

|

||||

"""获取记忆样本

|

||||

|

||||

Returns:

|

||||

list: 消息记录列表,每个元素是一个消息记录字典列表

|

||||

"""

|

||||

def get_memory_sample(self):

|

||||

# 硬编码:每条消息最大记忆次数

|

||||

# 如有需求可写入global_config

|

||||

if time_frequency is None:

|

||||

time_frequency = {"near": 2, "mid": 4, "far": 3}

|

||||

max_memorized_time_per_msg = 3

|

||||

|

||||

current_timestamp = datetime.datetime.now().timestamp()

|

||||

# 创建双峰分布的记忆调度器

|

||||

scheduler = MemoryBuildScheduler(

|

||||

n_hours1=global_config.memory_build_distribution[0], # 第一个分布均值(4小时前)

|

||||

std_hours1=global_config.memory_build_distribution[1], # 第一个分布标准差

|

||||

weight1=global_config.memory_build_distribution[2], # 第一个分布权重 60%

|

||||

n_hours2=global_config.memory_build_distribution[3], # 第二个分布均值(24小时前)

|

||||

std_hours2=global_config.memory_build_distribution[4], # 第二个分布标准差

|

||||

weight2=global_config.memory_build_distribution[5], # 第二个分布权重 40%

|

||||

total_samples=global_config.build_memory_sample_num # 总共生成10个时间点

|

||||

)

|

||||

|

||||

# 生成时间戳数组

|

||||

timestamps = scheduler.get_timestamp_array()

|

||||

# logger.debug(f"生成的时间戳数组: {timestamps}")

|

||||

# print(f"生成的时间戳数组: {timestamps}")

|

||||

# print(f"时间戳的实际时间: {[time.strftime('%Y-%m-%d %H:%M:%S', time.localtime(ts)) for ts in timestamps]}")

|

||||

logger.info(f"回忆往事: {[time.strftime('%Y-%m-%d %H:%M:%S', time.localtime(ts)) for ts in timestamps]}")

|

||||

chat_samples = []

|

||||

|

||||

# 短期:1h 中期:4h 长期:24h

|

||||

logger.debug("正在抽取短期消息样本")

|

||||

for i in range(time_frequency.get("near")):

|

||||

random_time = current_timestamp - random.randint(1, 3600)

|

||||

messages = self.random_get_msg_snippet(random_time, chat_size, max_memorized_time_per_msg)

|

||||

for timestamp in timestamps:

|

||||

messages = self.random_get_msg_snippet(

|

||||

timestamp,

|

||||

global_config.build_memory_sample_length,

|

||||

max_memorized_time_per_msg

|

||||

)

|

||||

if messages:

|

||||

logger.debug(f"成功抽取短期消息样本{len(messages)}条")

|

||||

time_diff = (datetime.datetime.now().timestamp() - timestamp) / 3600

|

||||

logger.debug(f"成功抽取 {time_diff:.1f} 小时前的消息样本,共{len(messages)}条")

|

||||

# print(f"成功抽取 {time_diff:.1f} 小时前的消息样本,共{len(messages)}条")

|

||||

chat_samples.append(messages)

|

||||

else:

|

||||

logger.warning(f"第{i}次短期消息样本抽取失败")

|

||||

|

||||

logger.debug("正在抽取中期消息样本")

|

||||

for i in range(time_frequency.get("mid")):

|

||||

random_time = current_timestamp - random.randint(3600, 3600 * 4)

|

||||

messages = self.random_get_msg_snippet(random_time, chat_size, max_memorized_time_per_msg)

|

||||

if messages:

|

||||

logger.debug(f"成功抽取中期消息样本{len(messages)}条")

|

||||

chat_samples.append(messages)

|

||||

else:

|

||||

logger.warning(f"第{i}次中期消息样本抽取失败")

|

||||

|

||||

logger.debug("正在抽取长期消息样本")

|

||||

for i in range(time_frequency.get("far")):

|

||||

random_time = current_timestamp - random.randint(3600 * 4, 3600 * 24)

|

||||

messages = self.random_get_msg_snippet(random_time, chat_size, max_memorized_time_per_msg)

|

||||

if messages:

|

||||

logger.debug(f"成功抽取长期消息样本{len(messages)}条")

|

||||

chat_samples.append(messages)

|

||||

else:

|

||||

logger.warning(f"第{i}次长期消息样本抽取失败")

|

||||

logger.debug(f"时间戳 {timestamp} 的消息样本抽取失败")

|

||||

|

||||

return chat_samples

|

||||

|

||||

async def memory_compress(self, messages: list, compress_rate=0.1):

|

||||

"""压缩消息记录为记忆

|

||||

|

||||

Returns:

|

||||

tuple: (压缩记忆集合, 相似主题字典)

|

||||

"""

|

||||

if not messages:

|

||||

return set(), {}

|

||||

|

||||

@@ -313,15 +296,23 @@ class Hippocampus:

|

||||

topics_response = await self.llm_topic_judge.generate_response(self.find_topic_llm(input_text, topic_num))

|

||||

|

||||

# 过滤topics

|

||||

# 从配置文件获取需要过滤的关键词列表

|

||||

filter_keywords = global_config.memory_ban_words

|

||||

|

||||

# 将topics_response[0]中的中文逗号、顿号、空格都替换成英文逗号

|

||||

# 然后按逗号分割成列表,并去除每个topic前后的空白字符

|

||||

topics = [

|

||||

topic.strip()

|

||||

for topic in topics_response[0].replace(",", ",").replace("、", ",").replace(" ", ",").split(",")

|

||||

if topic.strip()

|

||||

]

|

||||

|

||||

# 过滤掉包含禁用关键词的topic

|

||||

# any()检查topic中是否包含任何一个filter_keywords中的关键词

|

||||

# 只保留不包含禁用关键词的topic

|

||||

filtered_topics = [topic for topic in topics if not any(keyword in topic for keyword in filter_keywords)]

|

||||

|

||||

logger.info(f"过滤后话题: {filtered_topics}")

|

||||

logger.debug(f"过滤后话题: {filtered_topics}")

|

||||

|

||||

# 创建所有话题的请求任务

|

||||

tasks = []

|

||||

@@ -331,31 +322,42 @@ class Hippocampus:

|

||||

tasks.append((topic.strip(), task))

|

||||

|

||||

# 等待所有任务完成

|

||||

compressed_memory = set()

|

||||

# 初始化压缩后的记忆集合和相似主题字典

|

||||

compressed_memory = set() # 存储压缩后的(主题,内容)元组

|

||||

similar_topics_dict = {} # 存储每个话题的相似主题列表

|

||||

|

||||

# 遍历每个主题及其对应的LLM任务

|

||||

for topic, task in tasks:

|

||||

response = await task

|

||||

if response:

|

||||

# 将主题和LLM生成的内容添加到压缩记忆中

|

||||

compressed_memory.add((topic, response[0]))

|

||||

# 为每个话题查找相似的已存在主题

|

||||

|

||||

# 为当前主题寻找相似的已存在主题

|

||||

existing_topics = list(self.memory_graph.G.nodes())

|

||||

similar_topics = []

|

||||

|

||||

# 计算当前主题与每个已存在主题的相似度

|

||||

for existing_topic in existing_topics:

|

||||

# 使用jieba分词,将主题转换为词集合

|

||||

topic_words = set(jieba.cut(topic))

|

||||

existing_words = set(jieba.cut(existing_topic))

|

||||

|

||||

all_words = topic_words | existing_words

|

||||

v1 = [1 if word in topic_words else 0 for word in all_words]

|

||||

v2 = [1 if word in existing_words else 0 for word in all_words]

|

||||

# 构建词向量用于计算余弦相似度

|

||||

all_words = topic_words | existing_words # 所有不重复的词

|

||||

v1 = [1 if word in topic_words else 0 for word in all_words] # 当前主题的词向量

|

||||

v2 = [1 if word in existing_words else 0 for word in all_words] # 已存在主题的词向量

|

||||

|

||||

# 计算余弦相似度

|

||||

similarity = cosine_similarity(v1, v2)

|

||||

|

||||

if similarity >= 0.6:

|

||||

# 如果相似度超过阈值,添加到相似主题列表

|

||||

if similarity >= 0.7:

|

||||

similar_topics.append((existing_topic, similarity))

|

||||

|

||||

# 按相似度降序排序,只保留前3个最相似的主题

|

||||

similar_topics.sort(key=lambda x: x[1], reverse=True)

|

||||

similar_topics = similar_topics[:5]

|

||||

similar_topics = similar_topics[:3]

|

||||

similar_topics_dict[topic] = similar_topics

|

||||

|

||||

return compressed_memory, similar_topics_dict

|

||||

@@ -372,10 +374,13 @@ class Hippocampus:

|

||||

)

|

||||

return topic_num

|

||||

|

||||

async def operation_build_memory(self, chat_size=20):

|

||||

time_frequency = {"near": 1, "mid": 4, "far": 4}

|

||||

memory_samples = self.get_memory_sample(chat_size, time_frequency)

|

||||

|

||||

async def operation_build_memory(self):

|

||||

logger.debug("------------------------------------开始构建记忆--------------------------------------")

|

||||

start_time = time.time()

|

||||

memory_samples = self.get_memory_sample()

|

||||

all_added_nodes = []

|

||||

all_connected_nodes = []

|

||||

all_added_edges = []

|

||||

for i, messages in enumerate(memory_samples, 1):

|

||||

all_topics = []

|

||||

# 加载进度可视化

|

||||

@@ -387,12 +392,14 @@ class Hippocampus:

|

||||

|

||||

compress_rate = global_config.memory_compress_rate

|

||||

compressed_memory, similar_topics_dict = await self.memory_compress(messages, compress_rate)

|

||||

logger.info(f"压缩后记忆数量: {len(compressed_memory)},似曾相识的话题: {len(similar_topics_dict)}")

|

||||

logger.debug(f"压缩后记忆数量: {compressed_memory},似曾相识的话题: {similar_topics_dict}")

|

||||

|

||||

current_time = datetime.datetime.now().timestamp()

|

||||

logger.debug(f"添加节点: {', '.join(topic for topic, _ in compressed_memory)}")

|

||||

all_added_nodes.extend(topic for topic, _ in compressed_memory)

|

||||

# all_connected_nodes.extend(topic for topic, _ in similar_topics_dict)

|

||||

|

||||

for topic, memory in compressed_memory:

|

||||

logger.info(f"添加节点: {topic}")

|

||||

self.memory_graph.add_dot(topic, memory)

|

||||

all_topics.append(topic)

|

||||

|

||||

@@ -402,7 +409,13 @@ class Hippocampus:

|

||||

for similar_topic, similarity in similar_topics:

|

||||

if topic != similar_topic:

|

||||

strength = int(similarity * 10)

|

||||

logger.info(f"连接相似节点: {topic} 和 {similar_topic} (强度: {strength})")

|

||||

|

||||

logger.debug(f"连接相似节点: {topic} 和 {similar_topic} (强度: {strength})")

|

||||

all_added_edges.append(f"{topic}-{similar_topic}")

|

||||

|

||||

all_connected_nodes.append(topic)

|

||||